How to Get Your Coding Agent to One-Shot Complex Features

My coding agent failed a complex Shopify integration, even after trying five times. Then I tried one thing differently, and it one-shotted the full feature. Here's the technique.

AI DevX Engineer at SurePath AI. Former Amazon engineer. I write about using AI effectively for work.

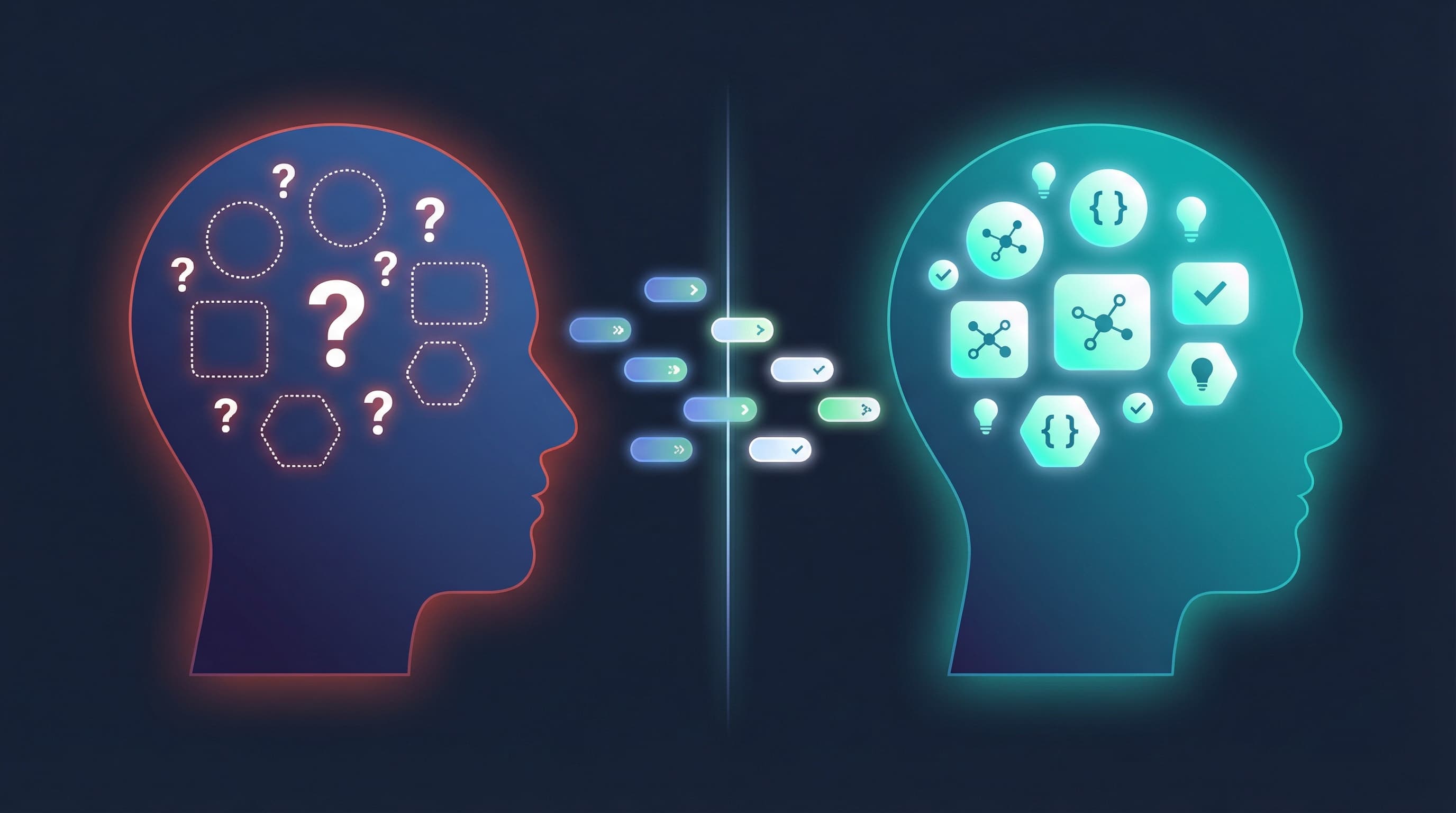

AI is not magic - it's a pattern-matching tool. Understanding how LLMs work explains why your experience sucked and reveals when coding agents actually shine.

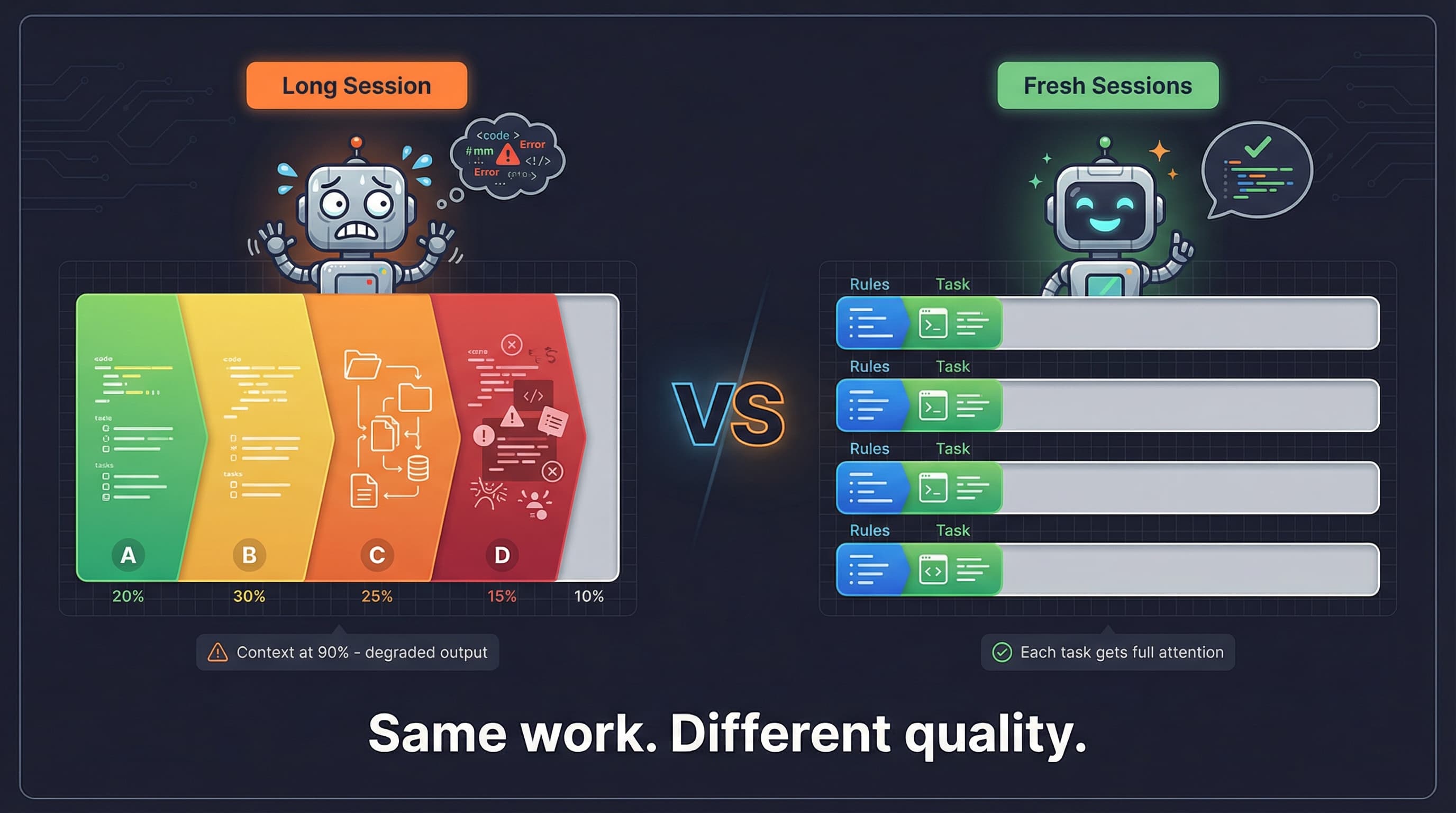

Why fresh context beats accumulated context for coding agents. Research on attention degradation and practical strategies for better AI-assisted development.

Stop writing comprehensive prompts. Let Claude Code interview you instead. Here are 7 ways I use AskUserQuestionTool to improve my coding agent's output quality.

Running multiple Claude Code sessions? Stop tab-hunting. This 5-minute tmux setup keeps every project organized in one place.

Use read-time qualifiers like "make this a 3 minute read" instead of vague words like "concise" or "detailed" to control AI output length.

When your coding agent makes a mistake, use that moment to upgrade the agent, with the agent itself. One sentence, 30 seconds, permanent fix.

The more your agent can run code and feed output back to itself, the less you do. Learn how to close feedback loops and let your coding agent iterate autonomously.

A structured workflow for using Claude Code with sub-agents to catch issues, maintain code quality, and ship faster. Plan reviews, code reviews, and persistent task management.

AI is a text predictor, not a mind reader. Structure your prompts with the RIPE framework (Role, Info, Process, Expected output) to get useful results instead of generic slop.